The AI Job Threat: What to Know and What to Do Right Now

I read both crisis reports cover to cover - here's the playbook

AI with ALLIE

The professional’s guide to quick AI bites for your personal life, work life, and beyond.

A Substack post crashed the stock market in late February. Then one of the most powerful financial firms in the world fired back. The AI conversation is moving with both speed and volatility, and the people who most need to pay attention are probably not reading either report.

So I'm going to break both down for you. Not just what each side says, but the assumptions underneath their arguments. Because as Adam Grant tells us in Think Again, assumptions should be challenged before conclusions.

This article covers:

Authors

Assumptions on job displacement

Each side’s predictions

Where they agree, where they disagree

The one thing I think both sides miss

The exact next steps to take

Who Wrote These AI Takes?

The doom scenario is from Citrini. Called "The 2028 Global Intelligence Crisis," it was written by two people: James Van Geelen, CEO of Citrini Research (a macro analysis Substack), and Alap Shah, an AI entrepreneur and former Citadel analyst who now runs an AI assistant company called Littlebird. It was published on Substack. It is speculative fiction framed as a financial memo written from the future (June 30, 2028). It is not a forecast - the authors said so themselves. But it spooked markets hard enough that IBM dropped 13% and Michael Burry (Christian Bale's character in The Big Short) amplified it on X.

The rebuttal, "The 2026 Global Intelligence Crisis," was written by Frank Flight, a macro strategist at Citadel Securities (Ken Griffin's market-making firm). It was published as an institutional research note with access to real-time labor market data, Fed surveys, and economic tracking tools. It is grounded in current data, not a projection.

Assumptions on Job Displacement From Each Firm

This is the part most takes skip - don't skip assumptions! The upstream assumptions almost matter more than the conclusions, because they're where you can actually form your own opinion.

Citrini’s Assumptions

Remember: Citrini is the doom scenario from June 2028.

AI capability will compound rapidly. They assume that because AI can improve its own code and capabilities, the pace of improvement will accelerate in a way that's fundamentally different from past technologies.

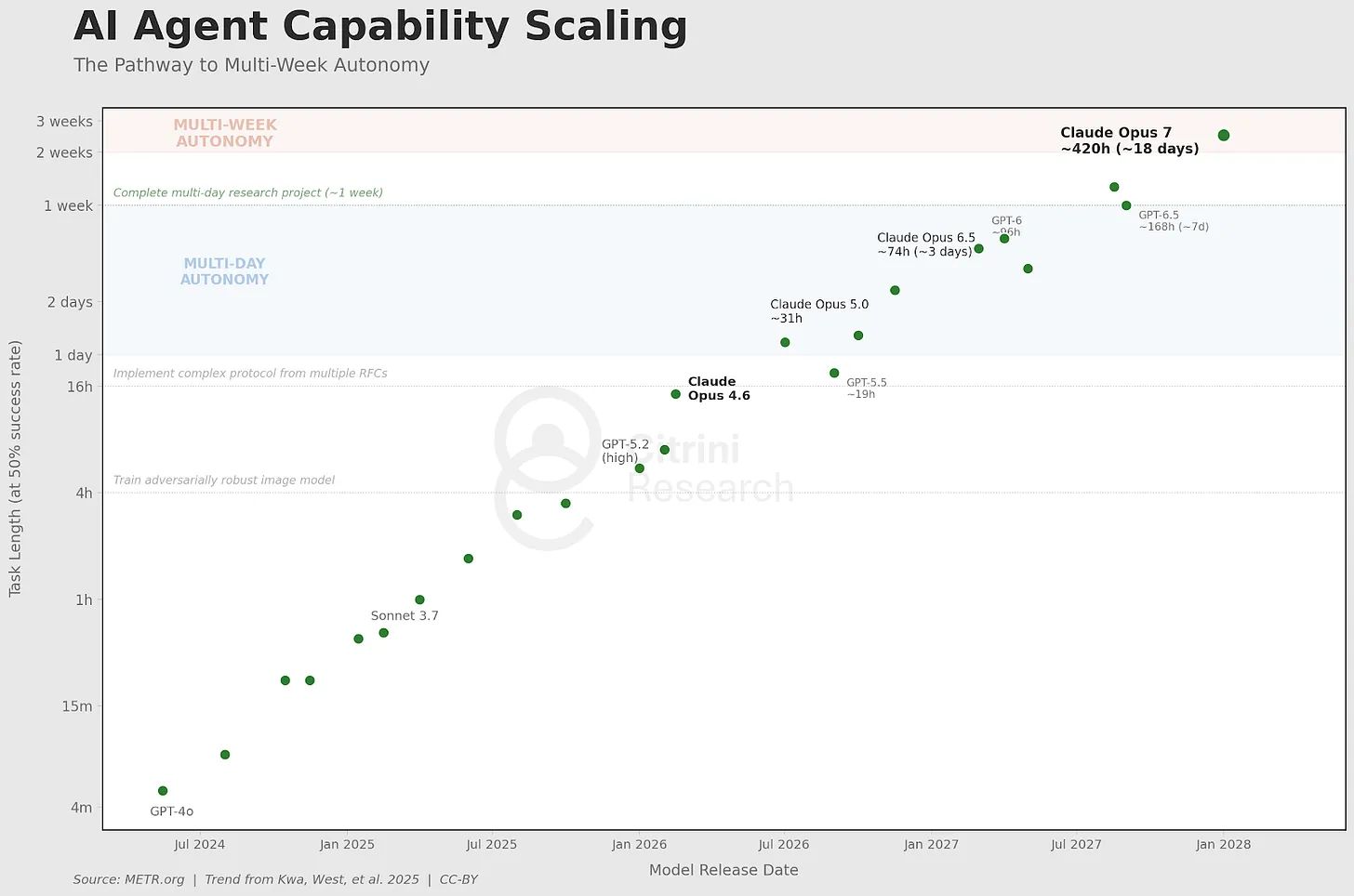

METR research on agent capabilities (cited in Citrini): the length of human task that an AI can take on, with the trend imagined forward to Claude Opus 7. Allie note: I always look at both the 50% and 80% success rates, not just 50%.
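The compounding assumption above can be sketched as simple doubling. This is a minimal illustration in Python, not METR's or Citrini's model: the function name, the one-hour starting horizon, and the six-month doubling time are all placeholder assumptions.

```python
def task_horizon_hours(months_from_now, start_hours=1.0, doubling_months=6.0):
    """Length of task (in human-hours) an AI can handle after some months.

    Assumes steady exponential growth. start_hours and doubling_months
    are hypothetical placeholders, not measured values.
    """
    return start_hours * 2 ** (months_from_now / doubling_months)


# Under these assumptions the horizon doubles every six months.
for months in (0, 6, 12, 24):
    print(f"{months:2d} months: {task_horizon_hours(months):5.1f} hours")
```

The point is the shape rather than the numbers: with a fixed doubling time of six months, two years is four doublings, or 16x.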

White-collar work is the most vulnerable. Their scenario specifically targets knowledge workers (think: software engineers, financial advisors, consultants, middle management), arguing that AI hits these roles harder and faster than blue-collar work.

The economy is built on friction, and AI removes friction. Their core insight is that much of what companies charge for is based on things being slow, complicated, or requiring human coordination. If AI makes those things fast and cheap, entire business models collapse.

Screenshot from Citrini report

Institutions will be too slow to respond. They assume that governments, regulators, and social safety nets will not adapt quickly enough to offset the displacement and that no meaningful fiscal response happens between now and 2028.

Income destruction leads to demand collapse. If high-earning knowledge workers lose their jobs, they stop spending. Vacations get canceled. Mortgages default. Tax revenue falls. The circular flow of the economy breaks. In other words, the economy works because people earn money and spend it, and that spending becomes someone else's income. If AI cuts out a large chunk of earners, the spending stops, and everything downstream from it (retail, housing, government budgets) starts to crack.

“A feedback loop with no natural brake”, Citrini Research

Citadel’s Assumptions

Remember: Citadel is the non-doomer forecast based on today's data.

Current data is the best predictor of near-term outcomes. They ground everything in what the numbers say today: unemployment at 4.28%, software job postings up 11%, new business formation surging.

Citadel reports on software engineer jobs versus overall job postings.

Technology adoption follows historical patterns. They assume AI will diffuse through the economy the way PCs and the internet did, following an S-curve. An S-curve means adoption starts slow, accelerates in the middle as costs drop and infrastructure builds out, then flattens as the market saturates and the easier gains are captured. It looks like an S when you graph it. Nearly every major technology in history has followed this pattern, just with different slopes.
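If it helps to see the S-curve as a formula, here is a minimal Python sketch using the standard logistic function. The parameter names and values (ceiling, steepness, midpoint) are illustrative assumptions, not figures from the Citadel note.

```python
import math


def logistic_adoption(t, ceiling=1.0, steepness=0.8, midpoint=10.0):
    """Share of the market that has adopted by year t (logistic S-curve).

    All defaults are hypothetical, chosen only to show the shape.
    steepness is the "slope" that differs between technologies.
    """
    return ceiling / (1.0 + math.exp(-steepness * (t - midpoint)))


# Slow start, fast middle, flat end.
years = [0, 5, 10, 15, 20]
shares = [logistic_adoption(t) for t in years]
for t, share in zip(years, shares):
    print(f"year {t:2d}: {share:6.1%}")
```

Changing `steepness` is the "different slopes" part: a larger value compresses the same S into fewer years.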

Physical constraints create natural brakes. Data centers take years to build, energy supply is limited, and compute costs rise as demand increases. Citadel believes these physical realities will slow down the pace of AI displacement. They make a specific point here: if you tried to automate white-collar work at the pace Citrini describes, the demand for compute would spike so dramatically that compute costs would rise above the cost of just paying a human to do the job. At that point, companies stop substituting AI for humans. There's a ceiling on how fast displacement can happen, and it's set by physics and economics (not just AI's insane benchmarks).
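Citadel's ceiling argument can be sketched as a break-even check. This is a toy model under stated assumptions, not Citadel's actual math: the quadratic cost curve, the function names, and every number below are made up for illustration.

```python
def ai_cost_per_task(share_automated, base_cost=5.0, scarcity=4.0):
    """Hypothetical cost of doing one task with AI.

    Cost rises as more work is automated, because compute gets scarce.
    base_cost and scarcity are illustration values, not real prices.
    """
    return base_cost * (1.0 + scarcity * share_automated) ** 2


def automation_ceiling(human_cost=40.0):
    """Largest automated share (in 1% steps) where AI is still cheaper."""
    ceiling_pct = 0
    for pct in range(1, 101):
        if ai_cost_per_task(pct / 100) >= human_cost:
            break  # substituting AI for a human no longer saves money
        ceiling_pct = pct
    return ceiling_pct / 100


print(f"automation stalls at about {automation_ceiling():.0%} of tasks")
```

In this toy version the stall point moves up if compute gets cheaper or humans get more expensive, which is exactly the lever the two reports disagree on.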

Productivity gains create new demand. When things get cheaper to produce, people consume more, and entirely new industries emerge. This has happened with every major technology in history. Citadel backs this up with Keynes: in 1930, he predicted that productivity growth would be so powerful that by the 21st century we'd only work 15 hours a week (let's all collectively laugh together...ready? HA!). He was right about productivity. He was massively wrong about what people would do with it. Instead of working less, we consumed more. Citadel argues Citrini is making the same mistake - underestimating how much humans will find to want once things get cheaper (this is known as the Jevons paradox, i.e. when a resource becomes more efficient to use, people use more of it, not less. I swear that at every single Silicon Valley event I go to, someone mentions the Jevons paradox on stage at least once).

Enterprise adoption is the relevant measure. Their diffusion data tracks how organizations and working adults are using AI. If that rate isn't spiking, displacement isn't imminent.

Democratic governments will intervene. They assume that if displacement gets bad enough, fiscal and regulatory policy will step in to offset the worst outcomes.

What Each Side Actually Predicts for Our AI Future

Citrini's Scenario (looking back from fictional June 2028)

They say: the S&P 500 falls 38% from its late-2026 highs. Unemployment hits 10.2%. White-collar job openings collapse. SaaS companies face mass cancellations as AI lets companies build tools internally. The $13 trillion residential mortgage market cracks as high-earning tech and finance folks default. AI creates some new jobs, but they pay a fraction of what the old ones paid. Tax revenue plummets because the government's revenue base is built on taxing human income. GDP still rises on paper, but the gains flow to the owners of compute and AI infrastructure rather than to households like ours. They call this "Ghost GDP"; Singapore and others call it "jobless growth". It's where the economy looks like it's growing, but the money isn't circulating through the people who used to earn and spend it. It shows up in the national accounts but it doesn't show up in anyone's paycheck or pocket.

Citadel's Position (grounded in February 2026 data)

They say: there is no evidence of imminent labor displacement. AI adoption at work is stable, not accelerating. The economy is adding jobs, not losing them. Data center construction is creating a construction hiring boom. New business formation suggests entrepreneurship is expanding, not contracting. AI is a productivity shock similar to past technologies and will ultimately expand total economic output. They make the economics argument that a productivity shock is a positive supply shock, meaning it lowers costs and expands what the economy can produce. If companies produce more at lower cost, more of that thing is out in the world, so prices fall, which increases our ability to buy the thing, which generally increases consumption. For Citrini's demand collapse to happen, you'd need labor income to fall with nothing to compensate for that dip: no new investment, no government transfers, no new industries absorbing workers. Citadel argues that all of that happening at once is a near impossibility.

New business formation rapidly expanding

New Business Applications, Citadel Securities, US Census Bureau

Where Citrini and Citadel Agree

Both reports acknowledge that AI will change the composition of work. Neither says "nothing will happen." There's no la la land of "AI isn't that good". Citadel explicitly states that AI will alter demand composition and generate new industries, just as the internet did. Both acknowledge that the AI capex cycle is massive ($650 billion, 2% of GDP, ~2800 planned data centers). Both recognize that compute and energy are real constraints. Citadel suggests AI might be "just enough to offset" the headwinds of aging populations, climate change, and deglobalization.

Where Citrini and Citadel Disagree

Timeline. Citrini thinks disruption could become intense within two years. Citadel thinks the adoption curve is much slower than that.

What to measure. Citrini focuses on capability and potential. Citadel focuses on current adoption data. (Allie note: always look at velocity, not just current data points!)

Who gets hurt. Citrini says white-collar workers and the entire downstream economy. Citadel says the data doesn't support that claim (yet).

Whether the system self-corrects. Citadel believes markets, governments, and institutions will adapt. Citrini believes the speed of AI will outrun our ability to respond.

So I know what you’re probably thinking: this is scary - what do I do?

First things first…

A Quick Note Before I Give You My Take

There are serious economists going deep on this debate right now, and I want to flag that. I'm not an engineer and I want to state clearly: I'm also not an economist. I'm not going to pretend to be one.

What I am, however, is an AI business advisor to some of the largest and most prominent companies and CEOs in the world. I am someone who is in the rooms where these reports are being discussed: in meetings with financial institutions, with Fortune 500 leadership, with the people making the capital allocation decisions right now. These are not abstract thought exercises for me or the people I work with. They're inputs into real strategy and money conversations happening behind closed doors.

One thing I've learned is that the media almost never gives you a good sense of what those closed-door conversations actually sound like. The public narrative is always cleaner, more binary, more confident than the private one. That's what I want to bring here - not an economist's deep dive, but an honest read from someone who is watching the ideas in these reports collide with real decisions in real time.

What Neither Report Talks About

We've had radical pendulum swings in AI sentiment (and spikes in capability) over the last few months, and really the last few weeks. One week everything is fine, the next week someone publishes a scenario that tanks the market. Both of these reports were written in the span of days. The conversation is moving incredibly fast and I genuinely feel the ground is shifting underneath all of us.

The one thing that tips me more toward the Citrini side, even though I think their timeline is aggressive: neither report talks about what I'd call self-embedding AI.

Everyone is focused on self-learning (which I believe is going to happen more this year, as I shared in my 2026 AI predictions). Self-learning is when the model itself gets smarter, more capable, learns to use new tools, figures out new reasoning strategies. But that still doesn't solve the adoption problem. Someone still has to put it into systems, connect it to tools, integrate it into workflows, deal with SAP and Salesforce and Databricks, train people on how to use it, discover new use cases, get the budget approved, navigate procurement, and convince lawyers not to block it, and that's just the tip of the iceberg! That whole layer of enterprise adoption is constrained by deeply human things: organizational speed, psychology, change management, internal politics, financial bureaucracy, and Homo sapiens-grade learning curves.

Citadel's entire argument rests on the fact that this layer is slow. And historically, sure, they're right. Technology adoption follows an S-curve because humans are the bottleneck.

Adoption of tools over time, from Epoch AI

But neither report addresses: what happens when AI starts being used to accelerate its own adoption?

That's what I mean by self-embedding. Not the model getting smarter. The model getting itself into more places, faster. That AI would help companies integrate AI, reduce the cost of connecting systems, shorten the learning curve, build automations it discovers, and maybe, just maybe, convince a general counsel to take its side. (To my lawyer reading this, I deeply appreciate you, it's just very easy to make lawyer jokes.)

Everyone is so focused on AI being used to improve the model itself that they're ignoring AI being used to improve enterprise adoption itself. Self-learning changes the capability curve; self-embedding changes the adoption curve. And if the adoption curve is the main thing Citadel is relying on to assume the disruption is slow, and AI starts bending that curve faster, then the S-curve model literally shatters.
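Here is a rough way to see what "bending the adoption curve" could mean. It is a minimal Python sketch, not a forecast: it steps a standard logistic growth rule year by year, and `self_embedding_boost` is a hypothetical knob that lets the growth rate rise as adoption rises (AI helping deploy AI). All names and numbers are assumptions for illustration.

```python
def years_to_reach(target, base_rate=0.5, self_embedding_boost=0.0,
                   start=0.01, max_years=100):
    """Years until adoption share reaches target under logistic growth.

    With self_embedding_boost = 0 this is a plain S-curve. A positive
    boost makes the growth rate climb as adoption climbs. Every
    parameter here is a made-up illustration value.
    """
    adoption = start
    for year in range(1, max_years + 1):
        rate = base_rate * (1.0 + self_embedding_boost * adoption)
        adoption = min(1.0, adoption + rate * adoption * (1.0 - adoption))
        if adoption >= target:
            return year
    return None  # target not reached within max_years


plain = years_to_reach(0.5)
bent = years_to_reach(0.5, self_embedding_boost=3.0)
print(f"plain S-curve: {plain} years to 50% adoption")
print(f"with self-embedding: {bent} years to 50% adoption")
```

The early years look almost identical in both runs, which is the uncomfortable part: current adoption data would not tell the two worlds apart until the curve is already steepening.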

Your Next Steps, No Matter Which Side Is Right

These apply whether AI disruption hits fast or slow. They put you in a strong position either way.

Start using AI tools now, seriously. Not casually. Build real workflows. The delta between people who use AI as a toy and people who use it as infrastructure is widening every week. Whichever scenario plays out, the people who understand these tools will be in a much better position.

Diversify your income and your skills. If you earn 100% of your income from one type of knowledge work, you have concentration risk. Add adjacent skills. Build something on the side. Create an asset that isn't purely your time.

Pay attention to what AI can't do well (yet), and get good at that. Judgment, taste, knowing what good looks like, relationship-building, physical-world problem solving, navigating ambiguity, leading through uncertainty. These are the areas where humans still have a meaningful edge.

Reduce your fixed costs. Both scenarios involve economic turbulence. Lower your monthly burn. Build a bigger financial buffer. Don't take on new debt calibrated to the assumption that your current income is permanent.
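A quick way to put a number on your buffer is months of runway: savings divided by monthly fixed costs. The Python sketch below uses a hypothetical household, and the function name and figures are placeholders to swap for your own.

```python
def runway_months(savings, monthly_fixed_costs):
    """Months you could cover fixed costs from savings with zero income."""
    if monthly_fixed_costs <= 0:
        raise ValueError("monthly_fixed_costs must be positive")
    return savings / monthly_fixed_costs


# Hypothetical household: same savings, before and after trimming the burn.
before = runway_months(savings=30_000, monthly_fixed_costs=5_000)
after = runway_months(savings=30_000, monthly_fixed_costs=4_000)
print(f"before: {before:.1f} months, after: {after:.1f} months")
```

Cutting the burn works on the denominator, so it extends runway immediately, while building savings takes time.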

Track and follow infra investments. Watch what's being built (data centers, energy projects), who's financing it, and on what timeline. These are forward-looking bets that tell you where the money thinks the world is going. And because these projects take so long to build, they let you see changes a few years out.

Talk to people outside your industry. The impacts of AI, as I've personally seen over the last decade in my work, will hit different sectors at different speeds. If you only talk to people in your field, you'll miss the signals coming from adjacent industries.

If You Think Citrini (Doomer) Is More Accurate

Move aggressively to learn AI at an operational level. Learn how to build workflows, automate processes, and manage multi-agent teams. Become the person who knows how to use AI to replace functions, because that makes you the person companies need to hire, not fire.

Rethink career bets in pure knowledge work. If you're early in your career and fully invested in a role that is primarily information/computer-based, explore roles that combine those skills with something harder to automate, like physical work, regulatory expertise, or human relationships.

Do industry-specific stress tests. For example, if you're in banking or lending, you'll want to stress test your portfolio against the possibility that borrowers in tech-heavy zip codes do not stay employed at current income levels. If you're in the energy/utility sector, you'll want to pay attention to the fact that AI infrastructure is accelerating energy demand at the same time that displacement could increase demand for social services.

If You Think Citadel (Grounded) Is More Accurate

Stay invested. Citadel's view implies that the AI capex cycle is inflationary and growth-enhancing. If they're right, selling into AI panic is the wrong move (this is not investment advice).

Lean into enterprise AI adoption. If the S-curve model is correct, we're still in the early-to-mid acceleration phase. Companies that integrate AI effectively now will have a meaningful competitive advantage as adoption matures. You could also pivot into this space for work.

Focus on building, not defending. New businesses and new industries will form rapidly in the Citadel scenario. Instead of worrying about displacement, look for the new categories that AI is creating and position yourself there. And, if displacement is slow and manageable, deepening expertise in your current field still pays off.

Watch for the data to change. If the adoption surveys start showing a spike in daily AI use for work, or if software job postings reverse, their S-curve thesis weakens. Stay close to the data.

Both reports are worth reading in full:

Citrini Research: "The 2028 Global Intelligence Crisis"

Citadel Securities: "The 2026 Global Intelligence Crisis"

This was a long one. But an important one. Throw it into AI, ask it to summarize it, ask it to compare it to your strategies, ask it to compare it against your career context doc, ask it to make it into a presentation, ask it to turn it into a dynamic spreadsheet or an infographic or a short email to send to your team. Turn it into action.

Stay curious, stay informed,

Allie

NEW LIVE AGENT LEARNING: AI MASTERMIND

Whichever side you lean towards, I want to help you start using AI tools now, seriously.

That’s why I’m exploring a multi-week AI Agent Mastermind for business professionals - not a quick overview, but a weekly deep dive into how to actually build agents, gain leverage, and even make money with them.

This is for the professionals who are done watching AI happen from the sidelines and are ready to build.
